Recently at the Brandon Hall Group Excellence Conference in West Palm Beach, I opened my session with two numbers that got everyone talking: 92% and 21%.

Ninety-two percent of organizations say they are “doing AI.”

Only 21% are successfully scaling it.

Almost everyone is experimenting, but few are changing how work actually happens. We are ‘accessorizing’ old workflows with new tools, then wondering why the business hasn’t fundamentally shifted.

It’s not a tech problem — it’s an organizational design decision

High-energy pilots and early wins are exciting, but they often hit the “messy middle,” where momentum fades. Our recent research across 700 global organizations shows that AI leaders aren’t winning because they have better algorithms. They see 14.7x higher workforce productivity because the CIO and CHRO are radically aligned.

AI leaders succeed not because of the model, but because they redesigned ownership before deployment.

Fear is not resistance — it’s information

In our survey, we learned that 70% of employees worry about being replaced, while 85% of AI leaders say willingness to learn now matters more than past experience.

That gap is a signal. Expressed fear isn’t opposition — it’s a sign that people don’t understand how expectations, incentives, and performance measurement are evolving. When tools change but rewards don’t, hesitation is survival.

If we scale intelligence without scaling clarity, trust, and human capability, we amplify anxiety instead of performance.

AI expands what’s possible. Humans determine what’s responsible. Alignment determines what actually scales.

The sequencing trap: Skills vs. AI strategy

I love skills. A skills-centered approach is key to agility. But here’s the hard truth: a skills taxonomy is outdated almost immediately. Manually mapping skills to jobs means you’re already behind.

If you start with skills alone, AI will feel disruptive because you’re reskilling yesterday’s workflows. Skills strategies are reactive — they respond to how work is defined today. A true organizational AI strategy is proactive. It redefines work for tomorrow.

When AI strategy sits at the center:

- Skills become directional, not just static lists.

- Learning connects to real workflow shifts.

- Mobility follows where value is being created.

Without this coherence, you get enthusiasm at the edges but confusion in the middle. With it, culture adapts, and expectations — and the intelligence driving them — become explicit.

Escaping pilot purgatory: Build AI anchors

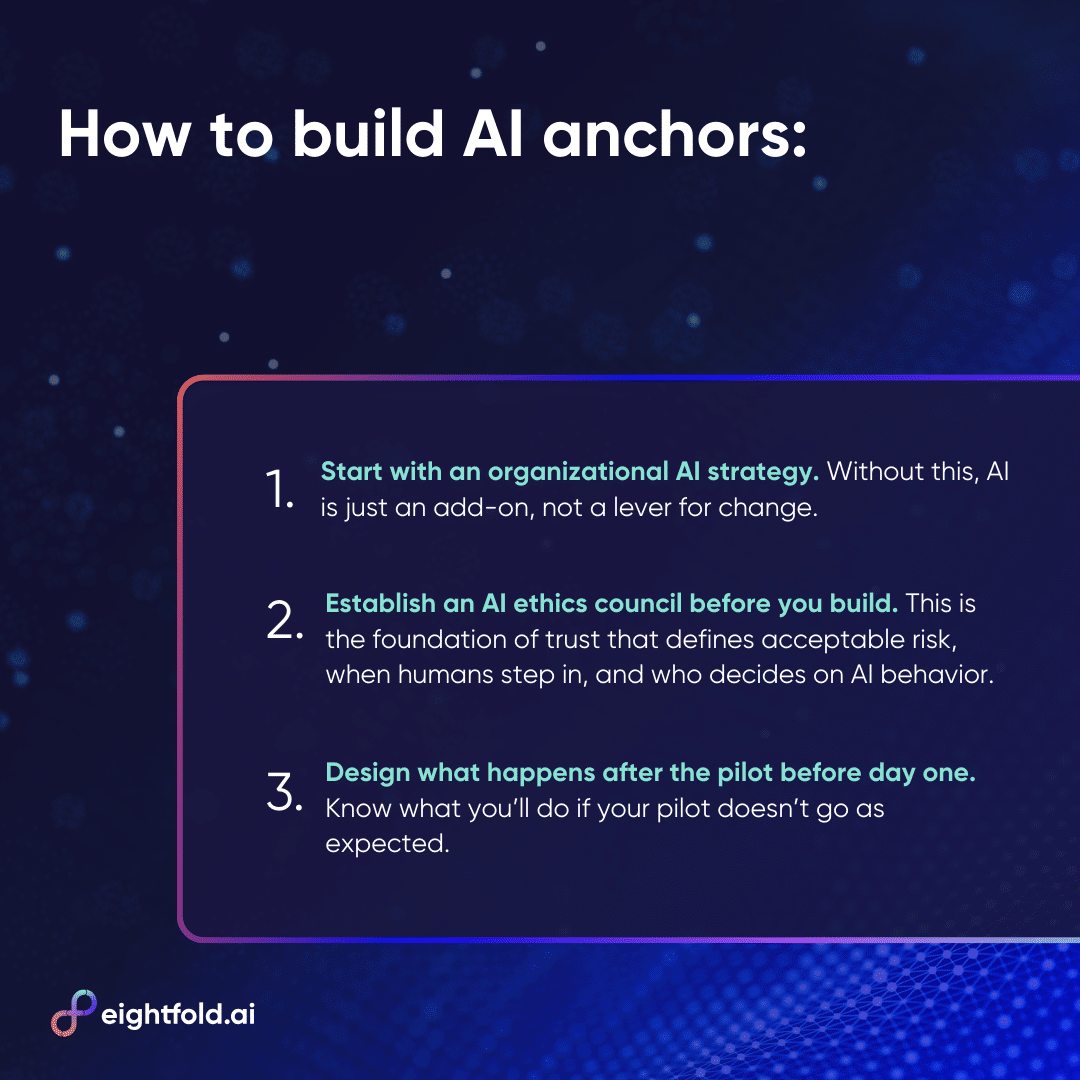

To move beyond experimentation, organizations need foundational commitments. We discussed three moves to anchor AI into the business DNA:

1. Start with an organizational AI strategy (not a tool list)

- Place AI strategy at the center of enterprise strategy.

- Ask: Why are we using AI? Where can it fundamentally change work? Who owns the consequences?

- IT can’t own the strategy while HR manages the fallout. Leaders must revisit authority, accountability, measurement, and workflow design. Otherwise, AI is just an add-on, not a lever for change.

2. Establish an AI ethics council before you build

- Ethical, responsible, explainable AI isn’t a “nice to have” — it’s the foundation of trust.

- A governance council defines acceptable risk, when humans must step in, and who decides on AI behavior.

- Include HR, data leaders, frontline operators, employees, and business executives. Diverse voices allow fast action without fear.

3. Design the “day after” the pilot before day one

- Leadership should align on three scenarios:

  - If it works: Redesign decision authority and performance metrics immediately.

  - If it sort of works: Identify whether friction is technical or organizational; fix narrative and sponsorship, not the tool.

  - If it doesn’t work: Was it the use case or the change management?

A pilot without a next-step agreement isn’t an experiment — it’s avoidance. Scaling requires pre-deciding how success, partial success, and failure translate into action.

The silver bullet question

I’m often asked: what’s the silver bullet for scaling AI?

There isn’t one.

No tool or license fixes misaligned leadership or stagnant strategy. AI intensifies whatever system it enters. Aligned leadership sharpens execution. Misalignment sharpens dysfunction.

“Scaling AI means redesigning decision authority, workflow ownership, skill expectations, governance, and performance measurement. If those systems remain unchanged, AI becomes a layer, not a lever.”

Done right, AI doesn’t just enhance work — it transforms it. The organizations that succeed are the ones willing to rethink systems, clarify ownership, and embed change into the very way work happens.

AI strategy starts at the top — together. See what our research says about the CHRO/CIO alignment advantage.

Rebecca Warren is Sr. Director of Talent-centered Transformation at Eightfold. Before joining Eightfold, she held multiple talent leadership roles with large CPG, agribusiness, restaurant, and retail organizations.