At Eightfold AI, we believe in leveraging AI not just in our products, but in how we build them. This is the story of how we used AI agents to tackle a critical accessibility backlog and achieve WCAG 2.2 AA compliance in just two months, a task we estimated would take 6-10 months of manual work.

The accessibility challenge

As an enterprise talent intelligence platform, accessibility isn’t optional — it’s essential. Our platform helps organizations make critical hiring and talent decisions, and it must be usable by everyone, regardless of their abilities. When we faced a backlog of accessibility issues that threatened our compliance goals, we had a choice: follow the traditional manual approach or innovate with AI.

We chose innovation.

What is accessibility and why does it matter?

Accessibility (often abbreviated as “a11y” – the “11” represents the 11 letters between “a” and “y”) refers to designing and developing products that can be used by people with disabilities. This includes:

- Visual impairments: Users who rely on screen readers or need high contrast.

- Motor disabilities: Users who navigate via keyboard instead of mouse.

- Cognitive disabilities: Users who need clear, simple interfaces.

- Hearing impairments: Users who need captions or visual indicators.

For enterprise software like Eightfold, accessibility is both a legal requirement and a business imperative. The Americans with Disabilities Act (ADA), Section 508, and similar regulations worldwide mandate that enterprise software be accessible. Beyond compliance, accessible design benefits everyone — clear navigation, keyboard shortcuts, and semantic HTML improve the experience for all users.

Understanding WCAG 2.2 AA compliance

WCAG (Web Content Accessibility Guidelines) 2.2 is the international standard for web accessibility, published by the World Wide Web Consortium (W3C).

The guidelines define three conformance levels:

- Level A: Basic accessibility (minimum requirements)

- Level AA: Standard accessibility (required for most organizations)

- Level AAA: Enhanced accessibility (optimal, but often impractical)

WCAG 2.2 AA compliance means meeting all Level A and Level AA success criteria across four principles:

- Perceivable: Information must be presentable in ways users can perceive

- Operable: Interface components must be operable by all users

- Understandable: Information and UI operation must be understandable

- Robust: Content must be robust enough for assistive technologies

Why WCAG 2.2 AA matters for enterprise software

For enterprise companies like Eightfold, WCAG 2.2 AA compliance is critical because:

- Legal compliance: Reduces risk of ADA lawsuits and regulatory penalties

- Market access: Many enterprise customers require accessibility compliance in contracts

- Talent pool: Accessible products enable organizations to hire from the full talent pool

- User experience: Accessible design principles improve usability for everyone

- Brand reputation: Demonstrates commitment to inclusion and diversity

When we assessed our platform, we discovered hundreds of accessibility issues across our React component library — missing ARIA labels, keyboard navigation gaps, insufficient color contrast, and form labeling issues. Each issue represented a barrier for users with disabilities.
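Of the issue types above, insufficient color contrast is the easiest to check mechanically, because WCAG defines it with a formula. The sketch below computes the WCAG contrast ratio for two sRGB colors and compares it with the Level AA thresholds of Success Criterion 1.4.3 (4.5:1 for normal text, 3:1 for large text). The function and type names are illustrative and are not taken from our codebase.

```typescript
// 8-bit sRGB channels, each in the range 0-255.
type Rgb = [number, number, number];

// Convert one 8-bit sRGB channel to its linear-light value.
// 0.04045 is the sRGB threshold; older WCAG text used 0.03928,
// which gives the same result for 8-bit channel values.
function channelToLinear(value: number): number {
  const s = value / 255;
  return s <= 0.04045 ? s / 12.92 : Math.pow((s + 0.055) / 1.055, 2.4);
}

// Relative luminance as defined by WCAG 2.x.
function relativeLuminance([r, g, b]: Rgb): number {
  return (
    0.2126 * channelToLinear(r) +
    0.7152 * channelToLinear(g) +
    0.0722 * channelToLinear(b)
  );
}

// Contrast ratio: (lighter + 0.05) / (darker + 0.05), from 1 to 21.
function contrastRatio(a: Rgb, b: Rgb): number {
  const la = relativeLuminance(a);
  const lb = relativeLuminance(b);
  return (Math.max(la, lb) + 0.05) / (Math.min(la, lb) + 0.05);
}

// Level AA check for Success Criterion 1.4.3 (Contrast (Minimum)).
function meetsAaContrast(fg: Rgb, bg: Rgb, largeText: boolean = false): boolean {
  return contrastRatio(fg, bg) >= (largeText ? 3 : 4.5);
}
```

For example, `meetsAaContrast([118, 118, 118], [255, 255, 255])` passes for normal text, while the slightly lighter gray `[119, 119, 119]` on white falls just short of 4.5:1.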

The traditional approach: Why it wasn’t enough

Traditionally, fixing accessibility issues follows this manual process:

- Audit: Run automated tools (such as axe-core or WAVE) or test manually to identify issues.

- Prioritize: Categorize issues by severity and WCAG criteria.

- Ticket creation: Create JIRA tickets for each issue with screenshots and descriptions.

- Developer assignment: Assign tickets to engineers (often as “nice-to-have” tasks).

- Manual fix: Developer reads ticket, locates component, implements fix.

- Testing: Write tests, run manual accessibility testing.

- Code review: PR review focusing on both functionality and accessibility.

- Deploy: Merge and deploy.

The problem: This process is slow, expensive, and error-prone.

- Time-consuming: Each fix requires context switching, code exploration, and manual testing

- Inconsistent: Different developers implement fixes differently

- Low priority: Accessibility often gets deprioritized against feature work

- Knowledge gap: Not all developers are accessibility experts

- Scale: With hundreds of issues, manual fixes would take 6-10 months

We needed a better way.

Our AI-powered solution: Autonomous accessibility agents

Instead of manually fixing hundreds of issues, we built an autonomous AI agent system that can analyze, implement, test, and deploy accessibility fixes automatically.

High-level architecture

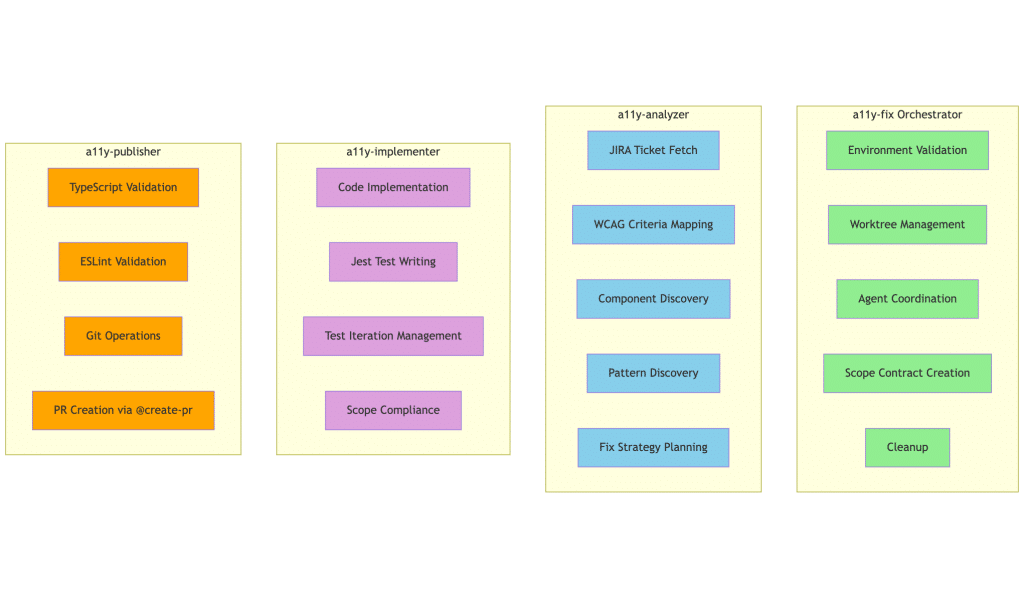

Agent responsibility matrix

Our system consists of three specialized AI agents orchestrated by a main orchestrator. This way, we have a clear separation of concerns.

In addition, a shared set of instructions gives every agent the same understanding of coding patterns, discovery methods, testing patterns, troubleshooting, WCAG fundamentals, and internal libraries such as Octuple.

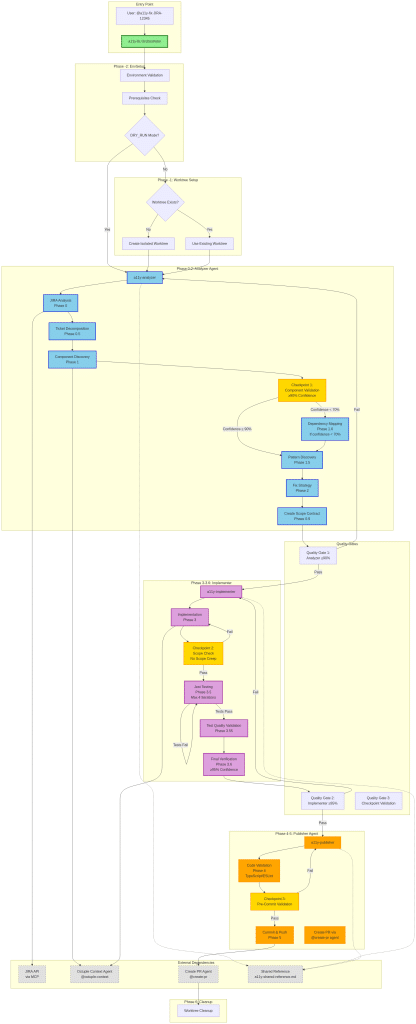

Agent orchestration

How it works: End-to-end

Step 1: Simple trigger

A developer or QA engineer creates a JIRA ticket describing an accessibility issue (e.g., “Submit button missing aria-label”). They simply comment:

@agent-a11y-fix www/react/src/components/Button/Button.tsx
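As an illustration of how such a trigger comment could be recognized, here is a minimal sketch of a parser. Only the `@agent-a11y-fix <path>` shape comes from the example above. The `FixTrigger` type, the `parseFixTrigger` function, and the path-safety rule are assumptions made for this sketch.

```typescript
// Hypothetical shape for a parsed trigger comment.
interface FixTrigger {
  agent: string; // the mentioned agent, e.g. "agent-a11y-fix"
  targetPath: string; // repo-relative path to the component file
}

// Returns the trigger found in a comment body, or null if there is none.
function parseFixTrigger(commentBody: string): FixTrigger | null {
  // The mention and the path must sit on the same line.
  const match = /^@(agent-a11y-fix)[ \t]+(\S+)[ \t]*$/m.exec(commentBody);
  if (match === null) return null;

  const agent = match[1];
  const targetPath = match[2];
  if (agent === undefined || targetPath === undefined) return null;

  // Assumption: only repo-relative paths without ".." segments are accepted.
  const escapesRepo =
    targetPath.charAt(0) === "/" || targetPath.split("/").indexOf("..") !== -1;
  return escapesRepo ? null : { agent, targetPath };
}
```

Rejecting absolute paths and parent-directory segments keeps a free-text comment from pointing the agent outside the repository.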

Step 2: Autonomous execution

The orchestrator agent takes over:

- Environment setup: Validates prerequisites, creates isolated git worktree

- Analysis phase: The analyzer agent:

    - Fetches JIRA ticket details and screenshots via API

    - Maps the issue to WCAG criteria (e.g., “missing aria-label” → WCAG 4.1.2)

    - Searches codebase to locate the exact component

    - Discovers similar fixes in the codebase for pattern matching

    - Creates a detailed fix strategy with confidence scoring

- Implementation phase: The implementer agent:

    - Reads the JIRA ticket multiple times (5 checkpoints) to prevent scope creep

    - Implements the fix following codebase patterns

    - Writes Jest tests for the accessibility fix

    - Iterates up to 4 times until tests pass

    - Validates test quality and scope compliance

- Publishing phase: The publisher agent:

    - Validates TypeScript and ESLint compliance

    - Creates atomic commit with descriptive message

    - Pushes branch and creates PR with comprehensive description

    - Generates test plan for manual verification
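The phases above run strictly in order, and a failed phase stops the run. A minimal sketch of that control flow follows. It is illustrative only: the real agents are LLM-driven and asynchronous, while this version is synchronous for brevity, and every name in it (`PipelineHooks`, `runPipeline`, and so on) is hypothetical.

```typescript
type PhaseName = "analyze" | "implement" | "publish";

interface PhaseOutcome {
  ok: boolean;
  detail: string;
}

// Hypothetical hooks; each phase stands in for one specialized agent.
interface PipelineHooks {
  setup: () => void; // e.g. validate prerequisites, create the worktree
  phases: Record<PhaseName, () => PhaseOutcome>;
  cleanup: () => void; // runs on success and on failure
}

interface PipelineRun {
  completed: PhaseName[];
  failedAt: PhaseName | null;
}

function runPipeline(hooks: PipelineHooks): PipelineRun {
  const order: PhaseName[] = ["analyze", "implement", "publish"];
  const run: PipelineRun = { completed: [], failedAt: null };

  hooks.setup();
  try {
    for (const phase of order) {
      const outcome = hooks.phases[phase]();
      if (!outcome.ok) {
        // Stop at the first failing phase; later phases never run.
        run.failedAt = phase;
        break;
      }
      run.completed.push(phase);
    }
  } finally {
    // Cleanup is guaranteed even if a phase throws.
    hooks.cleanup();
  }
  return run;
}
```

Putting cleanup in `finally` is one way to get the "cleanup on success or failure" behavior without repeating it in every phase.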

Step 3: Human review

The PR is ready for review, with all tests passing and validation complete. The developer reviews the changes and merges.

Key design principles

Our agent system is built on several critical principles:

- Quality over speed

    - Agents read unlimited files to understand context (no artificial limits)

    - Multiple confidence checkpoints (≥90% for analysis, ≥95% for implementation)

    - Five JIRA re-read checkpoints to prevent hallucinations

- Scope protection

    - Explicit “scope contracts” prevent fixing issues not in the ticket

    - Checkpoint 2 specifically validates no scope creep

    - Agents are instructed: “Fix ONLY what JIRA describes, nothing more”

- Pattern consistency

    - Agents discover existing accessibility patterns in the codebase

    - New fixes follow established patterns for consistency

    - Leverages shared reference documentation

- Non-interactive automation

    - Fully autonomous – no human input required during execution

    - All operations in isolated git worktree

    - Automatic cleanup on success or failure

- Modular architecture

    - Each agent has a focused responsibility

    - 56% context reduction per agent vs. monolithic approach

    - Clear failure isolation and debugging
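Two of these principles, confidence thresholds and scope protection, reduce to simple checks once the inputs exist. The sketch below shows one possible encoding. The thresholds come from the list above; representing a scope contract as a list of allowed files is an assumption made for illustration, and all names are hypothetical.

```typescript
// Thresholds from the principles above, expressed as fractions.
const CONFIDENCE_THRESHOLDS = { analysis: 0.9, implementation: 0.95 } as const;
type GatedPhase = keyof typeof CONFIDENCE_THRESHOLDS;

// A phase may proceed only if its self-reported confidence meets the bar.
function passesConfidenceGate(phase: GatedPhase, confidence: number): boolean {
  return confidence >= CONFIDENCE_THRESHOLDS[phase];
}

// Assumed representation of a scope contract: the files a ticket may touch.
interface ScopeContract {
  ticketKey: string;
  allowedFiles: string[];
}

// Returns every changed file that the contract does not allow.
function findScopeViolations(contract: ScopeContract, changedFiles: string[]): string[] {
  return changedFiles.filter((file) => contract.allowedFiles.indexOf(file) === -1);
}
```

A non-empty result from `findScopeViolations` would be grounds to reject the change before it reaches a commit.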

Technical deep dive: The agent workflow

Here’s what happens under the hood when an agent processes a ticket:

Analysis (a11y-analyzer)

- Fetches JIRA ticket via MCP (Model Context Protocol) integration

- Extracts WCAG criteria from issue description

- Searches codebase using multiple strategies:

    - i18n pattern matching

    - Hardcoded string search

    - Semantic codebase search

    - Screenshot text triangulation

- Validates component actually has the reported issue (Checkpoint 1)

- Discovers similar accessibility fixes for pattern matching

- Creates fix strategy with TypeScript patterns, test approach, complexity assessment
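To make the "extract WCAG criteria" step concrete, here is a deliberately simple stand-in. The analyzer is an AI agent, not a keyword table, so this sketch swaps in regular-expression rules purely to show the input and output of the step. The criterion numbers, titles, and levels are from WCAG 2.2; the rule list and function name are hypothetical.

```typescript
interface WcagCriterion {
  id: string;
  title: string;
  level: "A" | "AA";
}

interface MappingRule {
  pattern: RegExp;
  criterion: WcagCriterion;
}

// A small, non-exhaustive rule table covering the issue types named earlier.
const MAPPING_RULES: MappingRule[] = [
  {
    pattern: /aria-label|accessible name/i,
    criterion: { id: "4.1.2", title: "Name, Role, Value", level: "A" },
  },
  {
    pattern: /contrast/i,
    criterion: { id: "1.4.3", title: "Contrast (Minimum)", level: "AA" },
  },
  {
    pattern: /keyboard/i,
    criterion: { id: "2.1.1", title: "Keyboard", level: "A" },
  },
  {
    pattern: /form label|missing label/i,
    criterion: { id: "3.3.2", title: "Labels or Instructions", level: "A" },
  },
];

// Returns every criterion whose rule matches the ticket description.
function mapToWcag(description: string): WcagCriterion[] {
  return MAPPING_RULES
    .filter((rule) => rule.pattern.test(description))
    .map((rule) => rule.criterion);
}
```

An empty result is useful too: it signals that a ticket needs a closer look before any fix strategy is drafted.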

Implementation (a11y-implementer)

- Re-reads JIRA ticket (Checkpoint 4) to ensure focus

- Implements fix following discovered patterns

- For Octuple components (our design system), queries @octuple-context agent for exact prop names

- Writes Jest tests using React Testing Library

- Runs tests, iterates up to 4 times if failures occur

- Validates test quality (specificity, DOM validation, multiple assertions)

- Final verification against JIRA (Checkpoint 5) with ≥95% confidence
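The bounded test-and-revise loop in this phase can be sketched as follows. The assumption here is that "up to 4 times" means at most four test runs in total. The callbacks stand in for running Jest and for the agent revising its fix, and all names are hypothetical.

```typescript
interface TestRun {
  passed: boolean;
  failures: string[];
}

interface IterationResult {
  passed: boolean;
  iterations: number; // number of test runs actually performed
  lastFailures: string[];
}

const MAX_ITERATIONS = 4;

function iterateUntilPassing(
  runTests: () => TestRun,
  reviseFix: (failures: string[]) => void,
  maxIterations: number = MAX_ITERATIONS
): IterationResult {
  let lastFailures: string[] = [];
  for (let i = 1; i <= maxIterations; i++) {
    const result = runTests();
    if (result.passed) {
      return { passed: true, iterations: i, lastFailures: [] };
    }
    lastFailures = result.failures;
    // Revise only if another test run is still allowed.
    if (i < maxIterations) reviseFix(lastFailures);
  }
  return { passed: false, iterations: maxIterations, lastFailures };
}
```

Capping the loop means a fix that cannot be made to pass surfaces as a failure instead of consuming time indefinitely.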

Publishing (a11y-publisher)

- Validates TypeScript compilation

- Runs ESLint with auto-fix

- Pre-commit validation (Checkpoint 3)

- Creates commit with JIRA reference

- Invokes @create-pr agent to fill in the PR template

- Generates comprehensive test plan for manual QA
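As a small illustration of "commit with JIRA reference", the sketch below builds a commit message from a ticket key and a summary. The message layout (ticket key in the subject, WCAG criteria in the body) is an assumed convention for this example, not a description of our actual commit format, and the names are hypothetical.

```typescript
interface CommitInput {
  ticketKey: string; // e.g. "ENG-12345"
  summary: string;
  wcagIds: string[]; // e.g. ["4.1.2"]
}

function buildCommitMessage(input: CommitInput): string {
  // JIRA issue keys look like PROJECT-123; reject anything else early.
  if (!/^[A-Z][A-Z0-9]*-\d+$/.test(input.ticketKey)) {
    throw new Error("Invalid JIRA key: " + input.ticketKey);
  }
  const subject = `${input.ticketKey}: ${input.summary}`;
  if (input.wcagIds.length === 0) return subject;
  // A blank line separates the subject from the body, per git convention.
  return `${subject}\n\nWCAG: ${input.wcagIds.join(", ")}`;
}
```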

Integration with our toolchain

Our agents integrate seamlessly with our existing development workflow:

- JIRA: Direct API integration via MCP for ticket fetching

- Git: Isolated worktree management for safe parallel work

- GitHub: Automated PR creation with proper templates

- CI/CD: All validation runs before PR creation

- Design system: Integration with Octuple component library for prop discovery
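For the Git integration, the isolation comes from `git worktree`, which checks out a branch into a separate directory so parallel runs do not touch each other's files. The sketch below only builds the command arguments and does not execute anything. The branch naming scheme, directory layout, and function name are assumptions for illustration.

```typescript
interface WorktreePlan {
  branch: string;
  path: string;
  create: string[]; // argv for creating the worktree
  remove: string[]; // argv for removing it afterwards
}

function planWorktree(ticketKey: string, baseRef: string, rootDir: string): WorktreePlan {
  // Hypothetical conventions: one branch and one directory per ticket.
  const branch = `a11y/${ticketKey.toLowerCase()}`;
  const path = `${rootDir}/${ticketKey}`;
  return {
    branch,
    path,
    // git worktree add -b <new-branch> <path> <commit-ish>
    create: ["git", "worktree", "add", "-b", branch, path, baseRef],
    // --force also removes a worktree that has uncommitted changes
    remove: ["git", "worktree", "remove", "--force", path],
  };
}
```

Keeping the arguments as arrays, not a single shell string, avoids quoting problems when they are eventually passed to a process runner.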

The results: From 6-10 months to two months

Quantitative impact

Timeline compression:

- Traditional approach: 6-10 months for full backlog

- AI agent approach: 2 months for all major issues

- Speed improvement: 3-5x faster

Volume processed:

- Hundreds of accessibility issues fixed

- All fixes include automated tests

- Zero scope creep incidents

- 100% TypeScript and ESLint compliance

Quality metrics:

- Analyzer confidence: ≥90% (required threshold)

- Implementer confidence: ≥95% (required threshold)

- Test pass rate: 100% (agents iterate, up to 4 times, until tests pass)

- Code review time: Reduced by 60% (PRs are pre-validated)

Qualitative benefits

- Consistency: Every fix follows the same high-quality pattern. No more “developer A does it this way, developer B does it that way.”

- Knowledge transfer: The agents encode accessibility best practices. New developers learn by reviewing agent-generated PRs.

- Focus: Developers can focus on feature work while agents handle the accessibility backlog autonomously.

- Scalability: The system scales effortlessly. Need to fix 10 issues or 1000? The agents handle it the same way.

- Documentation: Every fix includes comprehensive PR descriptions, test plans, and JIRA references – creating a knowledge base of accessibility patterns.

Real-world example

Here’s what a typical agent execution looks like:

Input: JIRA ticket “ENG-12345: Submit button missing aria-label for screen readers”

Agent execution (fully autonomous):

- Analyzer locates SubmitButton.tsx component

- Discovers similar buttons with aria-label patterns

- Implementer adds aria-label={i18nUtils.gettext("Submit form")}

- Writes Jest test: expect(button).toHaveAttribute('aria-label', 'Submit form')

- Tests pass on iteration 2

- Publisher validates TypeScript/ESLint

- Creates PR with description, test plan, and JIRA reference

Output: Ready-to-merge PR in ~5-10 minutes, with tests passing and all validations complete.

Human time: ~2 minutes to review and merge (vs. 30-60 minutes for manual fix).
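The before and after states in this example can be modeled without a DOM. The sketch below is a dependency-free stand-in for the real Jest and React Testing Library test: it treats a button's props as a plain object and checks whether any source of an accessible name is present. It greatly simplifies the actual accessible-name computation, and the type and function names are hypothetical.

```typescript
// Simplified model of the props that can give a button an accessible name.
interface ButtonA11yProps {
  "aria-label"?: string;
  "aria-labelledby"?: string;
  visibleText?: string; // text content rendered inside the button
}

// True if at least one naming source is present and non-blank.
function hasAccessibleName(props: ButtonA11yProps): boolean {
  const sources = [props["aria-labelledby"], props["aria-label"], props.visibleText];
  return sources.some((value) => value !== undefined && value.trim().length > 0);
}

// The fix from the example: add an aria-label, leaving other props intact.
function withAriaLabel(props: ButtonA11yProps, label: string): ButtonA11yProps {
  return { ...props, "aria-label": label };
}
```

An icon-only button modeled as `{}` fails the check, and `withAriaLabel({}, "Submit form")` passes it, which mirrors what the generated test asserts against the rendered component.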

Path forward

Reaching compliance once, and quickly, is a success story, but the true value of AI will be realized only when we integrate these agents into our SDLC so that no new a11y issues creep in as the product evolves. There are several areas in which the agents can evolve.

Future enhancements

- Integrate the agent into our PR process so that it analyzes PRs before merge and catches accessibility issues early.

- Automate accessibility testing in the CI/CD pipeline so that UI builds are tested for accessibility issues before release. Integration with Playwright is one possible approach.

- Make the agents faster. The current architecture was optimized for quality, not speed, so the next step is to make code generation fast enough that running these agents in CI/CD does not delay our build or release cadence.

Cultural shift: AI as a teammate

Perhaps the most significant impact is cultural. Our engineers now think of AI agents as teammates that:

- Handle repetitive, well-defined tasks

- Maintain consistency across the codebase

- Encode and propagate best practices

- Scale with our engineering organization

This isn’t about replacing engineers – it’s about amplifying their impact. Engineers spend less time on routine fixes and more time on innovation, architecture, and solving complex problems.

Lessons learned

Building and deploying AI agents for accessibility fixes taught us several important lessons:

- Quality gates are essential. Our confidence thresholds (90% for analysis, 95% for implementation) and multiple checkpoints prevent low-quality outputs. AI agents need guardrails.

- Scope protection prevents drift. Explicit scope contracts and checkpoint validation ensure agents fix only what’s requested. Without this, agents tend to “improve” things beyond scope.

- Modularity enables debugging. Breaking the system into focused agents (analyzer, implementer, publisher) makes it easier to debug failures and improve individual components.

- Pattern discovery is powerful. Agents that discover and follow existing codebase patterns produce more consistent, maintainable code than agents that invent new patterns.

- Human-in-the-loop still matters. While agents are autonomous, human review of PRs ensures business context and catches edge cases. The best systems combine AI automation with human judgment.

Conclusion

Achieving WCAG 2.2 AA compliance in two months instead of 6-10 months demonstrates the transformative power of AI-led engineering. By treating AI agents as autonomous teammates that handle well-defined, repetitive tasks, we’ve:

- ✅ Achieved compliance faster than thought possible

- ✅ Maintained high code quality and consistency

- ✅ Freed engineers to focus on innovation

- ✅ Created a scalable system for ongoing compliance

- ✅ Established a foundation for AI-led engineering culture

At Eightfold, we’re not just building AI products — we’re building with AI. This accessibility agent system is just the beginning. As we continue to integrate AI into our development lifecycle, we’re creating an engineering organization that’s faster, more consistent, and more capable than ever before.

The future of software engineering isn’t just about writing code, it’s about orchestrating intelligent systems that amplify human creativity and expertise.

And we’re building that future, one agent at a time. The future really works here.
