There’s a version of the AI fairness conversation that treats the algorithm as the whole problem. Get the algorithm right, the thinking goes, and you get fair outcomes. But this framing misses something fundamental: an algorithm is only as fair as the data it learned from.

AI doesn’t invent bias from scratch. It learns patterns from historical data. And if that historical data reflects decades of biased hiring decisions — if it over-represents certain demographics in senior roles, under-represents others in technical ones, or encodes socioeconomic signals that correlate with protected categories — the model will learn those patterns. Not because it’s trying to discriminate but because that’s what the data taught it.

This is a more widespread problem than most organizations realize. Legacy platforms trained only on your internal, historical data don’t just inherit your past decisions — they amplify them, surfacing the same patterns with higher confidence and less visibility into why. Speed doesn’t fix bias. It compounds it.

Our responsible AI begins with a deliberate, ongoing commitment to what goes into the Talent Intelligence Platform before training starts. This post covers what that looks like in practice.

CEO and Co-Founder Ashutosh Garg discussing the importance of responsible AI at Eightfold.

Why historical data is a problem

The intuition behind AI hiring tools is straightforward: analyze patterns in what successful candidates look like, and use that to identify future candidates with similar profiles. The challenge is that “successful” in historical data often means “hired and retained under past conditions” — conditions that may have included significant bias.

If a technology organization’s historical hiring data shows that 80% of senior engineers who advanced to leadership were men, a model trained on that data may learn to weight features that correlate with male candidates more highly — not because those features are genuinely predictive of success, but because they correlate with a historical pattern that itself reflected bias.

This is the core challenge: the data doesn’t label itself as biased. It just looks like signal.

A model trained exclusively on one organization’s internal data can only learn from that organization’s decisions — including its worst ones. It becomes a system that doesn’t predict who will succeed. It predicts who has historically been allowed to.

Our approach to this challenge is grounded in a clear principle: models should learn the qualifications of successful individuals, not their identity. The Talent Intelligence Platform is trained on billions of global career trajectories — the world’s most complete map of how human potential actually moves — rather than on any single organization’s limited, internally skewed history. The engineering challenge is making that principle operational at scale.

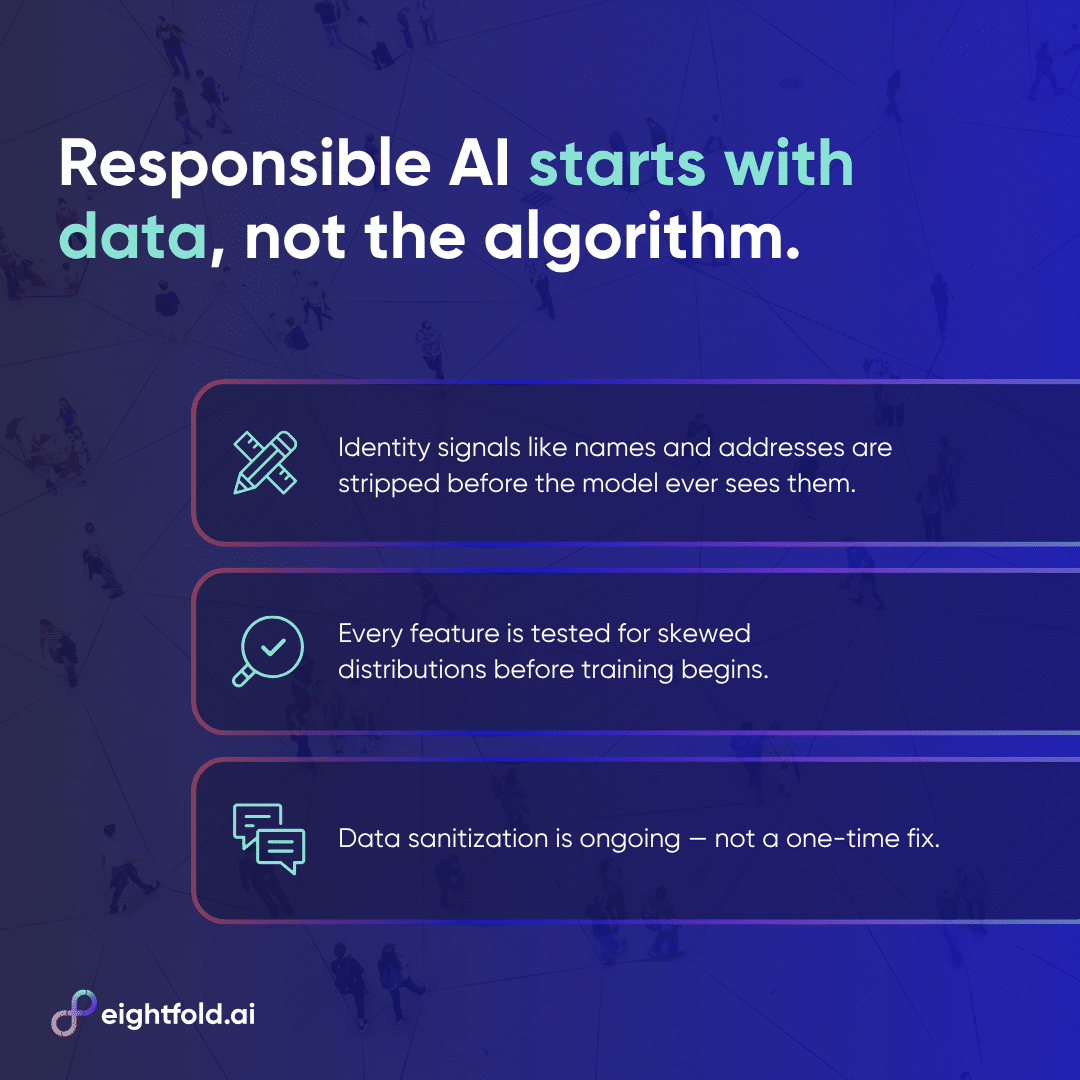

Masked features: removing identity from the training signal

The first line of defense is stripping identity information from the data before it reaches the model. For our Talent Intelligence Platform, input data is cleaned of names, contact information, and address information — fields that don’t speak to a candidate’s qualifications but can serve as proxies for protected categories.

Names can imply gender and ethnicity. Addresses can encode socioeconomic background and, in some geographies, race. Email addresses may contain names. None of this information is relevant to whether a candidate can do a job — but a model exposed to it might learn to weight it anyway, if it happens to correlate with outcomes in the training data.

Removing these features reduces the model’s ability to explicitly incorporate protected category information into its scoring. But this is harder than it sounds. Resumes come in an enormous variety of formats, and every unusual format is an opportunity for masking to fail.

A name embedded in an unusual position, a photo included in a non-standard way, a detail that implies demographic information indirectly — these edge cases require ongoing vigilance.

That’s why we treat feature masking not as a solved problem but as an evolving one, and why it’s explicitly understood as one layer of a broader defense rather than a complete solution.

Feature distribution analysis: vetting what goes in

Beyond removing identity signals, responsible data practice requires understanding what each feature actually represents — and whether it behaves the way it should.

Before any feature is used in model training, our team establishes a hypothesis: what should this feature measure? How should its values be distributed across the candidate population? What would it look like if the feature were working as intended, and what would it look like if something had gone wrong?

These hypotheses are then tested against actual feature distributions before training begins. A feature that shows unexpected clustering, an asymmetric distribution, or a pattern that suggests it’s encoding something other than what it’s supposed to measure gets flagged for review.

This matters for fairness in a specific way: features with skewed distributions can inadvertently encode protected category information. If a feature that’s supposed to measure “years of relevant experience” turns out to have systematically different distributions across gender groups — because of how experience has historically been accumulated and described differently across those groups — it may function as a proxy for gender even if gender was never intended to be part of the model’s decision-making.

Catching these issues before training, rather than after, is far more effective than trying to correct for them post-hoc.

Data sanitization as a practice, not a project

One of the most important things to understand about data fairness is that it’s not a one-time exercise. The data landscape changes. New job titles emerge. Industries shift. The workforce composition of certain roles evolves. Language used in resumes changes over time. What counts as an appropriate training signal today may look different in two years.

Our approach reflects this reality. Data sanitization processes are revisited and updated as the world changes. What’s considered a good feature is reassessed. New potential proxies for protected categories are identified and addressed.

This is one of the less visible aspects of responsible AI, but it’s among the most important. A commitment to fair data that doesn’t include ongoing maintenance is a commitment that degrades over time.

Fairness as the foundation

With the right data practices in place, the Talent Intelligence Platform has the best possible foundation for fair outcomes. But data quality is necessary, not sufficient — and that framing understates the ambition.

The goal isn’t to minimize bias as a liability. It’s to build a system where fairness isn’t a feature that gets added or toggled. It’s structural. Every decision, whether evaluating one candidate or one million, is held to the same standard. Not fairness as a feature. Fairness as the foundation.

That standard extends into how the models themselves are built and tested. The training process — the algorithms chosen, the evaluation criteria applied, the checks run before a model is released — introduces its own set of fairness considerations that data quality alone can’t address.

In the next post, we go inside that process: the specific metrics we use to measure fairness during training, how early stopping prevents bias from being learned in the first place, and why no single metric is sufficient to define what it means for a model to be fair.

Learn more about responsible AI at Eightfold — download the whitepaper.