Responsible AI doesn’t end at launch. That’s not a caveat — it’s the point.

A model that performs equitably at release can drift over time. The data distributions it encounters in production may differ from training conditions. New use cases surface edge cases the original evaluation didn’t anticipate. And without active monitoring, none of this is visible until something has already gone wrong.

This reality is increasingly reflected in the regulatory environment. The EEOC’s AI and Algorithmic Fairness Initiative places employers on notice that AI-powered hiring tools can trigger civil rights liability when they produce disparate outcomes. New York City’s Automated Employment Decision Tools law requires any employer using AI-assisted hiring to complete an independent bias audit before deployment — and to repeat it annually.

These aren’t the reasons we built a governance framework. They’re confirmation that the field is catching up to what responsible AI practice has always required. The fourth pillar of our responsible AI framework is governance — the ongoing infrastructure that keeps commitments to fairness from degrading after a model ships.

CEO and Co-Founder Ashutosh Garg discussing the importance of responsible AI at Eightfold.

The gap between model evaluation and real-world outcomes

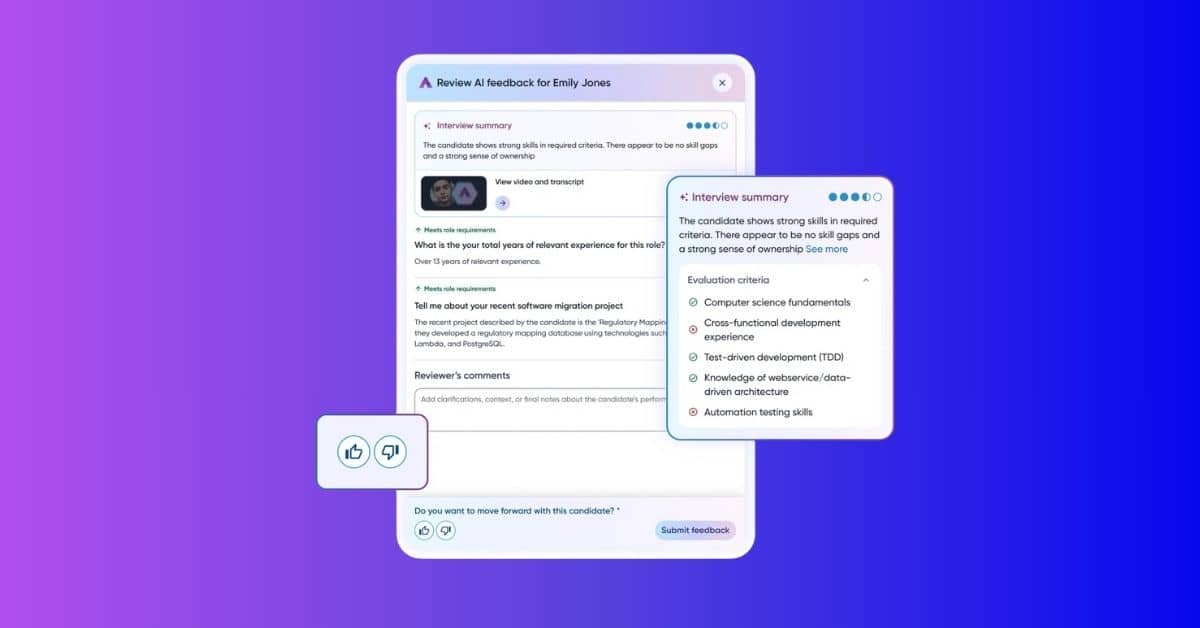

Model evaluation frameworks — the metrics described in the previous post — answer a specific question: does the Talent Intelligence Platform perform consistently across demographic subgroups? That’s an essential question. But it’s not the only one that matters.

A model that performs equally well across groups can still produce unequal real-world outcomes if the underlying data is biased. If women are systematically underrepresented in the pool of “successful hires” in a training dataset because of historical barriers, a model that learns from that data will generate recommendations that reflect those historical patterns — even if the model itself shows no differential performance by gender.

Adverse impact analysis addresses this complementary question: does the use of this tool result in disparate outcomes across groups in practice? This is the kind of analysis that employment law has applied to human hiring decisions for decades, and it’s the framework we apply to our AI-assisted hiring tools.

Adverse impact analysis: bridging AI and employment law

Adverse impact analysis examines selection rates across demographic subgroups and asks whether the differences are large enough to indicate discrimination — intentional or otherwise.

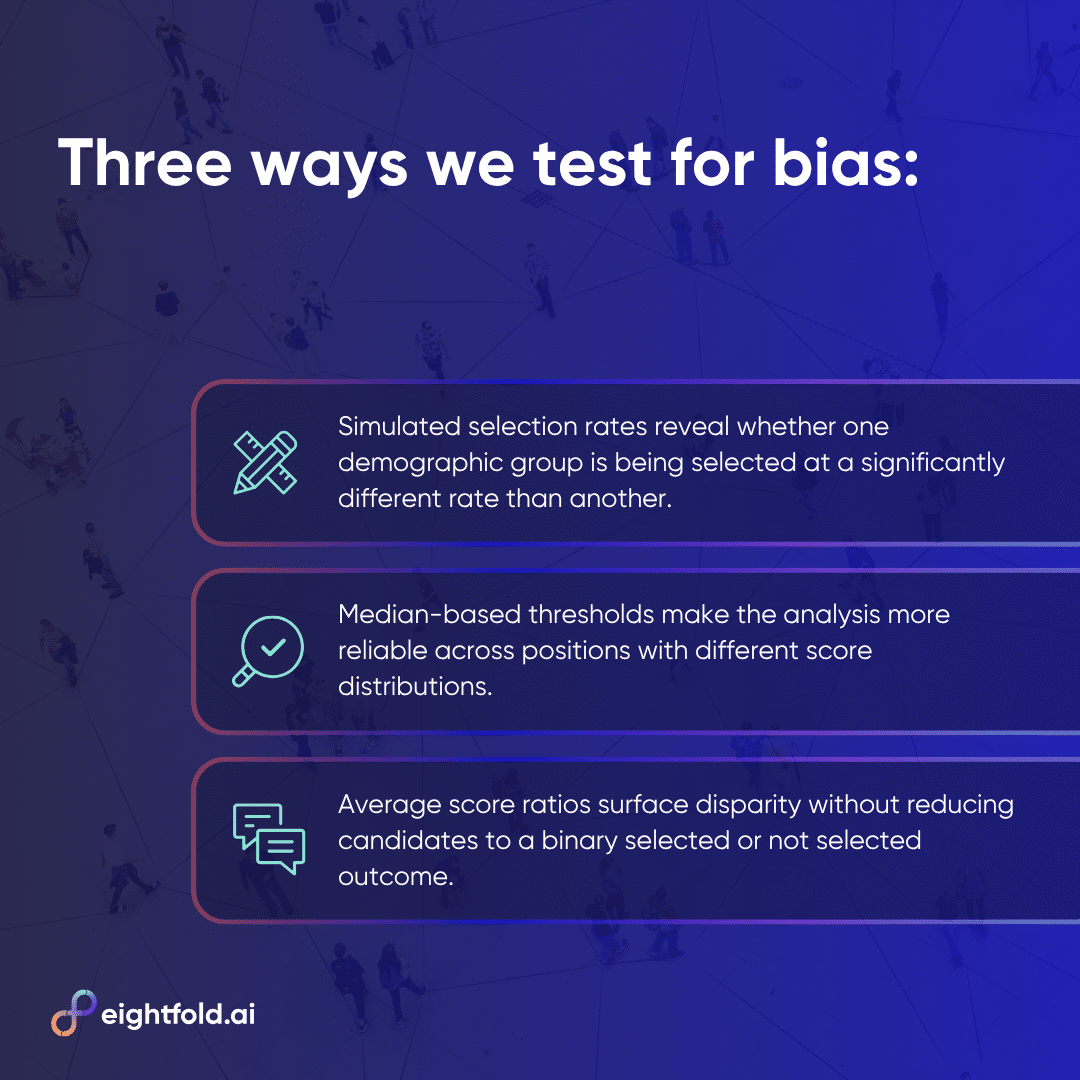

We use three complementary approaches, each suited to different data conditions.

- The first uses simulated selection rates based on a cutoff threshold. For any given position, candidates above the threshold are considered “selected,” and selection rates are calculated for each demographic subgroup. Statistical tests then evaluate whether observed differences reflect genuine disparity or sampling variation.

- The second uses the median score in the dataset as the threshold rather than a predetermined cutoff — making the analysis more robust to score distribution differences across positions and datasets.

- The third examines average scores across groups directly, rather than binary selected/not-selected classification, calculating the ratio between average scores for different subgroups. Ratios close to 1.0 indicate similar treatment across groups.

Each approach surfaces different aspects of potential disparity, and each has limitations that make it insufficient on its own.
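The three approaches can be sketched in a few lines of Python. This is a minimal illustration, not the platform's actual code: the function names, the 0 to 100 score scale, the sample scores, and the cutoff of 68 are all assumptions made for the example.

```python
from statistics import mean, median

def selection_rates(scores_by_group, threshold):
    """Share of each group's candidates scoring above the threshold."""
    return {
        group: sum(score > threshold for score in scores) / len(scores)
        for group, scores in scores_by_group.items()
    }

def pooled_median(scores_by_group):
    """Median of all scores in the dataset, pooled across groups."""
    return median(s for scores in scores_by_group.values() for s in scores)

def mean_score_ratio(scores_by_group, numerator_group, denominator_group):
    """Ratio of average scores; values near 1.0 suggest similar treatment."""
    return mean(scores_by_group[numerator_group]) / mean(scores_by_group[denominator_group])

# Hypothetical match scores (0 to 100) for a single position.
scores = {
    "group_a": [90, 80, 70, 60, 50],
    "group_b": [85, 75, 65, 55, 45],
}

# Approach 1: predetermined cutoff (68 is an arbitrary illustrative value).
fixed_cutoff_rates = selection_rates(scores, threshold=68)
# Approach 2: the dataset's own median as the cutoff.
median_cutoff_rates = selection_rates(scores, pooled_median(scores))
# Approach 3: ratio of average scores, with no selected/not-selected split.
score_ratio = mean_score_ratio(scores, "group_b", "group_a")

print(fixed_cutoff_rates, median_cutoff_rates, round(score_ratio, 3))
```

The selection rates from the first two approaches would then feed the statistical tests discussed in the next section, while the score ratio can be read directly.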

Why no single statistical test is enough

The history of adverse impact analysis in employment law has produced a rich literature on the strengths and weaknesses of different statistical tests — and that literature has direct relevance to AI systems operating at scale.

The Z-test measures whether differences in selection rates between subgroups are statistically significant. It works well at moderate sample sizes. At very large sample sizes, the kind the Talent Intelligence Platform routinely encounters, it becomes an unreliable indicator of meaningful bias. A difference of just one percentage point in selection rates can become highly statistically significant across millions of applications, even when that difference has no practical significance for the candidates involved.
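To see the sample-size effect concretely, here is a sketch of a pooled two-proportion Z-test using only the standard library. The function name and the applicant counts are hypothetical, and the example reads the small gap as one percentage point (30% versus 29%).

```python
import math

def two_proportion_z_test(selected_a, total_a, selected_b, total_b):
    """Pooled two-proportion Z-test; returns (z, two-sided p-value)."""
    rate_a = selected_a / total_a
    rate_b = selected_b / total_b
    pooled = (selected_a + selected_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Same 30% vs 29% selection rates at two hypothetical sample sizes.
z_moderate, p_moderate = two_proportion_z_test(300, 1_000, 290, 1_000)
z_large, p_large = two_proportion_z_test(300_000, 1_000_000, 290_000, 1_000_000)

print(f"n=1,000 per group:     z={z_moderate:.2f}, p={p_moderate:.3f}")
print(f"n=1,000,000 per group: z={z_large:.2f}, p={p_large:.3g}")
```

The selection rates are identical in both calls. Only the sample size changes, yet the second result is overwhelmingly "significant" while the first is not.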

The 4/5ths Rule addresses this by measuring practical significance independent of sample size. It compares one group’s selection rate to another’s: a ratio below 0.8 or above 1.25 indicates potentially significant adverse impact, regardless of statistical significance. This scale-independence makes it particularly useful at large sample sizes. At small sample sizes, however, a single additional selection can flip the result, which makes the rule unreliable without supplementary safeguards like the “flip flop” test, which checks whether a result changes if one selection is transferred from the advantaged to the disadvantaged group.
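A rough sketch of the ratio check and the flip-flop safeguard might look like the following. The function names and counts are hypothetical, and the flip-flop logic is one straightforward reading of the description above rather than a reference implementation.

```python
def impact_ratio(selected_a, total_a, selected_b, total_b):
    """Selection rate of group A divided by selection rate of group B."""
    return (selected_a / total_a) / (selected_b / total_b)

def violates_four_fifths(ratio):
    """True when the ratio falls outside the 0.8 to 1.25 band."""
    return ratio < 0.8 or ratio > 1.25

def flip_flop_changes_result(selected_dis, total_dis, selected_adv, total_adv):
    """Move one selection from the advantaged to the disadvantaged group
    and report whether the 4/5ths verdict changes.
    Assumes the advantaged group has at least two selections."""
    before = violates_four_fifths(
        impact_ratio(selected_dis, total_dis, selected_adv, total_adv)
    )
    after = violates_four_fifths(
        impact_ratio(selected_dis + 1, total_dis, selected_adv - 1, total_adv)
    )
    return before != after

# Hypothetical counts with the same 35% vs 45% selection rates at two scales.
small_is_fragile = flip_flop_changes_result(7, 20, 9, 20)
large_is_fragile = flip_flop_changes_result(3_500, 10_000, 4_500, 10_000)

print(small_is_fragile, large_is_fragile)
```

With 20 candidates per group, moving a single selection erases the apparent violation, so the finding should be treated with caution. With 10,000 per group, the same transfer barely moves the ratio.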

Fisher’s Exact Test is the preferred tool when sample sizes are small and the statistical assumptions of the Z-test don’t hold. It calculates the exact probability of observing a given selection pattern under the null hypothesis of no discrimination, without relying on approximations. Its limitation is computational: at large sample sizes, the factorial calculations involved can become prohibitively expensive.
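For small samples the exact calculation is short enough to write out. The sketch below computes a two-sided Fisher's exact p-value for a 2×2 selection table from binomial coefficients; the function name and the counts are illustrative assumptions. Library implementations such as `scipy.stats.fisher_exact` are the practical choice outside of a demonstration.

```python
from math import comb

def fisher_exact_two_sided(selected_a, rejected_a, selected_b, rejected_b):
    """Two-sided Fisher's exact p-value for a 2x2 selection table.
    Sums the probabilities of every table (with the same row and column
    totals) that is no more likely than the observed one."""
    n_a = selected_a + rejected_a
    n_b = selected_b + rejected_b
    total_selected = selected_a + selected_b
    all_tables = comb(n_a + n_b, total_selected)

    def probability(k):
        # Hypergeometric probability that group A receives k selections.
        return comb(n_a, k) * comb(n_b, total_selected - k) / all_tables

    lowest = max(0, total_selected - n_b)
    highest = min(n_a, total_selected)
    observed = probability(selected_a)
    # Small relative tolerance so ties are not lost to float rounding.
    return sum(
        p
        for p in (probability(k) for k in range(lowest, highest + 1))
        if p <= observed * (1 + 1e-9)
    )

# Hypothetical small sample: 1 of 10 selected in group A, 11 of 14 in group B.
p_value = fisher_exact_two_sided(1, 9, 11, 3)
print(f"p = {p_value:.6f}")
```

The loop over every possible table is what grows costly as group sizes increase, which is the computational limitation noted above.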

Our adverse impact analysis framework applies all three tests where each is most reliable: the Z-test for moderate samples, the 4/5ths Rule for large-scale data, and Fisher’s Exact Test for small samples. The goal is a comprehensive picture that no single test can provide.

Perturbation testing: fairness at the individual level

Adverse impact analysis provides a population-level view: across all candidates scored for a given position, are outcomes distributed equitably? Perturbation testing provides an individual-level view: for a specific candidate, does their score change if résumé details implying a different demographic group are substituted?

In perturbation testing, we create pairs of résumés: an original and a modified version in which signals such as names, which can imply gender or ethnicity, are changed to suggest a candidate from a different demographic group. Match scores for both versions are then compared using an independent samples t-test.

The Talent Intelligence Platform should produce scores that are statistically indistinguishable between the original and modified résumés. The qualifications haven’t changed. The candidate’s skills, experience, and fit for the role are identical. If scores diverge significantly, that’s evidence the model is treating demographic signals as relevant features — which they should not be.

A t-statistic close to zero and a high p-value on perturbation tests indicate that match scores are not statistically sensitive to the demographic signals embedded in names. This is one of the most direct tests of whether bias has crept into model scoring at the individual level, and it maps directly to the standard every candidate deserves: evaluated on what they can do, not who they are.
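As a simplified illustration, the t-statistic for such a comparison can be computed with the standard library alone. The scores below are invented, the function name is hypothetical, and the sketch assumes the pooled-variance (Student's) form of the independent samples test. In practice the p-value would come from a t distribution with the returned degrees of freedom, for example via `scipy.stats.ttest_ind`.

```python
from math import sqrt
from statistics import mean, variance

def independent_t_statistic(sample_1, sample_2):
    """Pooled-variance independent samples t-statistic and degrees of freedom."""
    n1, n2 = len(sample_1), len(sample_2)
    degrees_of_freedom = n1 + n2 - 2
    pooled_variance = (
        (n1 - 1) * variance(sample_1) + (n2 - 1) * variance(sample_2)
    ) / degrees_of_freedom
    std_err = sqrt(pooled_variance * (1 / n1 + 1 / n2))
    t_stat = (mean(sample_1) - mean(sample_2)) / std_err
    return t_stat, degrees_of_freedom

# Hypothetical match scores for the same six résumés, before and after
# name signals were changed to imply a different demographic group.
original_scores = [72, 65, 80, 58, 91, 77]
perturbed_scores = [71, 66, 81, 59, 90, 77]

t_stat, dof = independent_t_statistic(original_scores, perturbed_scores)
print(f"t = {t_stat:.3f}, degrees of freedom = {dof}")
```

A t-statistic this close to zero is the pattern the test is looking for: the name change did not move the scores in any systematic direction.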

External audits: accountability beyond internal testing

Internal testing — however rigorous — has inherent limitations as an accountability mechanism. The same team that builds a system is not ideally positioned to objectively assess its fairness. Internal incentives, shared assumptions, and familiarity with the system all create blind spots.

External bias audits address this by bringing an independent perspective to the evaluation process. Third-party auditors examine the Talent Intelligence Platform against objective fairness standards, provide findings to stakeholders and customers, and create a public record of accountability. For customers in jurisdictions with mandatory bias audit requirements, such as New York City, this also ensures compliance with applicable law.

Beyond compliance, external audits serve a trust function. Customers and candidates who interact with AI-assisted hiring systems can’t evaluate those systems themselves. An independent audit — conducted by credentialed experts under a defined methodology — provides the kind of objective assurance that internal claims of fairness cannot substitute for. It’s part of why the Talent Intelligence Platform holds FedRAMP Moderate and ISO 42001 certifications that general-purpose AI tools cannot meet.

Active monitoring: keeping fairness commitments after launch

The final component of our governance framework is continuous monitoring — the infrastructure that ensures fairness commitments made at launch are maintained over time.

Latency and accuracy metrics are tracked on a live dashboard, monitored on a regular cadence by the engineering team. Automated alarms trigger when metrics cross predetermined thresholds, prompting immediate investigation and response. This treats model drift — including fairness drift — as an operational concern, not an annual review item.

We also maintain continuously growing “golden datasets” curated through a human-in-the-loop process. Models in production are regularly evaluated against these datasets to catch performance changes that may not be visible in aggregate metrics.

One specific standard: match score probability distributions across positions should maintain a stable trend over time. A drifting distribution is an early warning sign that something in the model’s behavior has changed.
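One common way to quantify a drifting score distribution is the Population Stability Index (PSI). It is shown here purely as an illustrative technique; nothing in this post should be read as saying it is the specific metric behind our monitoring. The bin edges, sample scores, and the 0.25 alert threshold are all assumptions for the sketch.

```python
import math

def histogram_shares(scores, bin_edges):
    """Fraction of scores in each bin; the last bin includes its upper edge.
    Scores outside the edges are not counted in any bin."""
    counts = [0] * (len(bin_edges) - 1)
    for score in scores:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            in_bin = bin_edges[i] <= score < bin_edges[i + 1]
            if in_bin or (is_last and score == bin_edges[-1]):
                counts[i] += 1
                break
    return [count / len(scores) for count in counts]

def population_stability_index(baseline, current, bin_edges, floor=1e-6):
    """PSI between two score samples; 0 means identical binned distributions."""
    base = [max(share, floor) for share in histogram_shares(baseline, bin_edges)]
    curr = [max(share, floor) for share in histogram_shares(current, bin_edges)]
    return sum((c - b) * math.log(c / b) for b, c in zip(base, curr))

# Hypothetical match scores (0 to 100) from two monitoring windows.
edges = [0, 25, 50, 75, 100]
last_quarter = [10, 20, 30, 40, 50, 60, 70, 80, 90, 95]
this_quarter = [40, 50, 55, 60, 70, 80, 85, 90, 95, 100]

psi_stable = population_stability_index(last_quarter, last_quarter, edges)
psi_shifted = population_stability_index(last_quarter, this_quarter, edges)

ALERT_THRESHOLD = 0.25  # illustrative rule-of-thumb value, not a platform setting
print(psi_stable, round(psi_shifted, 2), psi_shifted > ALERT_THRESHOLD)
```

A check like this can run on a schedule alongside the dashboard alarms, so that a shift in the score distribution is flagged even when aggregate accuracy still looks healthy.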

The combination of automated monitoring, regular human review, and structured golden dataset evaluation creates multiple overlapping detection mechanisms — so that issues are identified early, before they’ve had time to compound into significant real-world impact.

Fairness as the foundation

The four pillars covered in this series — right products, right data, right algorithms, and right governance — represent a single, continuous commitment: that every candidate deserves the same quality of evaluation, the same standard, the same bar. Not just the candidates who apply early. Not just the candidates in the largest demographic groups. Every candidate.

AI fairness isn’t something you achieve once. It’s something you maintain. The regulatory environment is evolving. The research is evolving. The data distributions models encounter in the real world are evolving. An approach to responsible AI that doesn’t evolve with them will produce systems that become less fair over time, even without any intentional decision to change anything.

For HR leaders and talent acquisition professionals evaluating AI tools, this series offers a framework for the questions worth asking. Not just “what did you do before launch?” but “what happens after?” Not just “does your model show equal accuracy?” but “do outcomes look equitable in practice?” Not just “have you been audited?” but “how often, by whom, and with what methodology?”

The answers to those questions separate AI built with genuine accountability from AI that treats fairness as a checkbox.

Not fairness as a feature. Fairness as the foundation.
